White Paper

The Role of Medical Affairs in Times of AI

This white paper explores the role and path forward for medical affairs as AI reshapes how individuals access and interpret scientific information.

AI is here. What does that mean for medical affairs?

With nearly 70% of physicians using it daily, AI has emerged as a primary tool for increasingly time-constrained healthcare professionals (HCPs) seeking scientific information. Usage is poised to grow as a new generation of physicians, trained in the digital age, views AI as an integral component of how they get their work done.

As AI continues to rapidly change physicians’ behavior, medical affairs faces three critical questions:

- Will HCPs still need medical affairs for scientific information?

- How can medical affairs ensure AI tools ingest proprietary data and share it accurately and reliably?

- If an HCP trusts an AI tool but gets misinformation that causes a detrimental effect, who is liable?

Current use of AI among physicians

Source: The 2025 Physicians AI Report

The question is not whether AI will be used, but what role medical affairs will play as AI reshapes how individuals access and interpret scientific information. In this white paper, we explore the path forward for medical affairs and the necessary shift toward a dual approach that centers on:

- The science: Take proactive responsibility for data accessibility in the digital ecosystem, making the right information available to stakeholders and the tools, platforms, and channels they use to find it.

- The relationship: Protect and strengthen the irreplaceable human connection of medical affairs, emphasizing the one-to-one relationship and mutual value created through deep scientific collaboration between biopharma and healthcare.

The opportunity is significant: Organizations that respond intentionally to this new mandate will strengthen relevance, trust, and influence. Those that don’t stand to lose all three.

Where generative AI and medical affairs converge

At their core, both generative AI (GenAI) and medical affairs disseminate knowledge and, to some extent, interpret evidence. This has introduced a competitive dynamic, not because AI and medical affairs serve identical purposes, but because they increasingly address the same job to be done. The first and most immediate risk, therefore, is that HCPs may stop coming to medical affairs for information.

Access to data, experts, and curated evidence forms the foundation of scientific exchange. Previously, human-bound constraints like availability, effort, and turnaround time often limited access. GenAI changes this, removing the friction points that traditionally governed data dissemination.

With this new ease of access comes a shift in perceived value. If time-constrained HCPs get answers that satisfy them at their first point of need, then the threshold for engaging with medical affairs fundamentally changes.

This is the earliest signal of a burning platform that has quietly taken shape, where maintaining the status quo carries more risk than change.

Burning platforms are rarely obvious in real time. More often they’re recognized in hindsight, after the environment has already changed. The impact isn’t always immediately visible or stark, which makes it difficult to build a real sense of urgency to respond.

For medical affairs, this shift has gone unseen primarily due to the way AI entered the enterprise conversation. Across industries, and particularly in life sciences, leaders have mainly focused on the inward-facing view of AI as a tool for operational gain. How can it help cut costs, simplify operations, and drive productivity across the business?

While these efforts are necessary and exciting, they only represent one side of the story. The other side — the outward-facing view — is just as important to consider. From it comes a second underlying risk that precedes the behavioral shifts we’re seeing with AI adoption across healthcare. It’s more structural, and arguably even more consequential, because it affects public health discourse and patient safety at large.

The information blind spot: What’s training AI?

Most AI models are primarily trained on public data, possibly missing critical information like proprietary data from biopharma companies that medical affairs stewards. This brings an inevitable risk that when an HCP or a patient prompts the tool, the tool gets it wrong. It believes it is right because it doesn’t know what it doesn’t know.

Synthesizing conclusions without access to the full body of relevant data, or from incomplete, outdated, or secondary interpretations of evidence, creates a blind spot that contributes to a growing challenge: the spread of misinformation and disinformation.

Several leading global organizations have also directly addressed the issue as it relates to public health:

- The World Health Organization has warned that health misinformation and disinformation are a major threat to global health.

- The European Parliament identifies health disinformation as a systemic, ongoing threat to public health and democracy.

- The World Economic Forum cites misinformation and disinformation as the top short- to medium-term global risk.

- ECRI cites the wide availability and viral spread of medical misinformation as a top-three patient safety concern.

Source: World Health Organization, Review (2024); European Parliament Study (2024); World Economic Forum, The Global Risks Report 2025 (2025); ECRI, Top 10 Patient Safety Concerns 2025 Report (2025).

While AI did not create this problem, it accelerates the impact. When medical affairs’ input is absent from the digital ecosystems that shape AI output, it creates a vacuum filled by secondary and often non-evidence-based sources.

The challenge for medical affairs today is how to remain the trusted scientific authority to healthcare in an environment where AI increasingly mediates scientific understanding. In the larger ecosystem of public health, AI is just as important to the solution.

Using core strengths to meet new realities

Medical affairs has a new mandate to reposition itself by extending its core strengths to meet new realities. This requires a dual commitment.

- The science: When it comes to information access, scientific stewardship in digital spaces must become intentional and proactive. That means ensuring accurate, evidence-based scientific information is more accessible not only to stakeholders but also to the tools, platforms, and channels they are using to find it.

- The relationship: Medical affairs maintains the advantage of one-to-one relationships: knowing exactly who to talk to and cultivating those relationships through coordinated activities. The biopharma industry has spent decades anchoring to an engagement model that meets the stakeholder at eye level. This remains an invaluable differentiator for medical affairs, but it can and must evolve.

Let’s look at each core strength in more detail and explore the actions medical affairs can take to get there.

The science: Improving accessibility in a digital-first environment

Medical affairs is responsible not only for the quality of evidence, but for how evidence is represented, contextualized, and interpreted. Should medical affairs have this responsibility in an AI-mediated environment too?

Improving accessibility in the digital space is about making information discoverable — anytime, anywhere. That requires both operational discipline and content evolution.

Consider the following questions:

- How often does a Gemini summary cite your evidence?

- Does OpenEvidence correlate your real-world evidence with the standard of care?

- If you ask ChatGPT or Claude about the scientific position of a given product, does its answer align with your company’s?

Model optimization: Why machine readability matters

Thinking beyond a document-based approach to data and content management helps medical affairs influence how scientific information shows up in AI-mediated spaces. The shift focuses on the technical mechanics that allow machines to discover, interpret, and cite evidence with the same nuance as a human expert.

Before AI-powered search, the standard for digital content visibility was search engine optimization (SEO). The focus was on specific techniques to improve how content ranks in search engine results pages (SERPs).

AI is changing that model. Instead of optimizing for high SERP rank, the primary goal now is to ensure AI cites or references your data, studies, or content. This practice of generative engine optimization (GEO) builds on SEO by optimizing for generative models using approaches that make content easier for AI systems to understand, trust, and reuse.

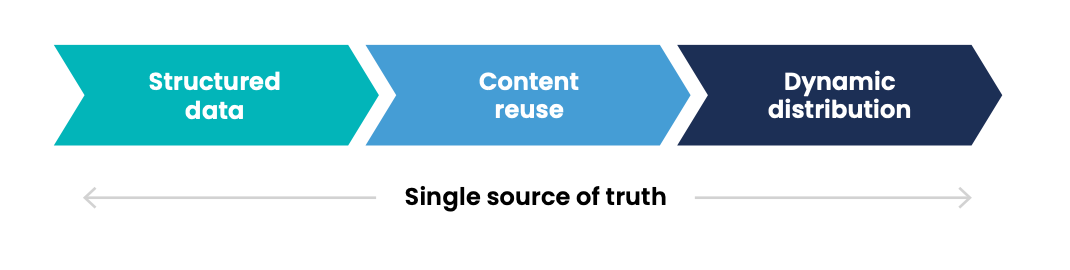

GEO optimizes for comprehension and citation rather than clicks.

Digital strategies must fundamentally change to accommodate what AI engines treat as high-quality, relevant information to surface. Think about it as a shift from digital content to machine-readable science.

Machine-readable science requires content evolution

Machine readability is critical to support an AI-enabled content supply chain. In this context, content evolution optimized for an AI ecosystem is a win-win.

Structured data

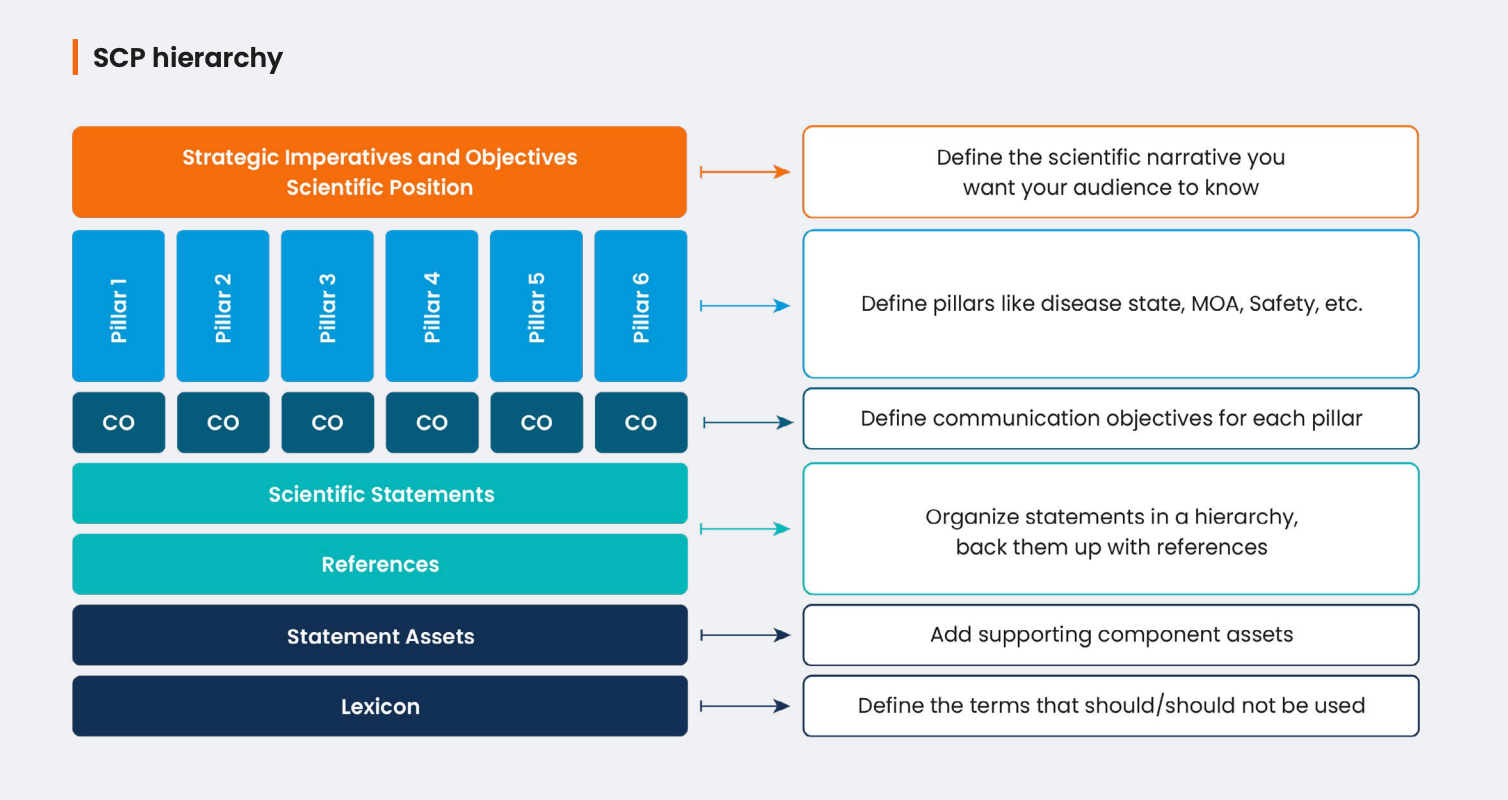

Models need structured inputs to interpret the concepts that should ground their outputs. Fortunately, medical affairs already has an advantage. The core information to communicate has a set structure already defined in a scientific communication platform (SCP). But in this context, it’s about managing the information itself rather than a document that contains it.

These structured components give AI the context it needs to understand:

- What a scientific statement is

- How it relates to other concepts

- What evidence supports it

But what happens when that context changes? Perhaps an MSL identified an education gap in the field and you recently published new evidence that closes it. Updating the component updates the context — accurately and consistently — across content and channels.
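As a rough illustration of the idea above, the following Python sketch models one structured component and shows how updating it once changes every asset rendered from it. All names here (`ScientificStatement`, `render`, the identifiers, templates, and placeholder studies) are hypothetical and chosen only for illustration; they do not describe any particular SCP or vendor data model.

```python
from dataclasses import dataclass, field


@dataclass
class ScientificStatement:
    """One format-free component: a claim plus the context a model needs."""
    statement_id: str                                           # hypothetical ID scheme
    text: str                                                   # what the statement is
    related_concepts: list[str] = field(default_factory=list)   # how it relates to other concepts
    evidence: list[str] = field(default_factory=list)           # what evidence supports it
    version: int = 1

    def add_evidence(self, citation: str) -> None:
        """Record newly published evidence and bump the component version."""
        self.evidence.append(citation)
        self.version += 1


def render(template: str, component: ScientificStatement) -> str:
    """Build channel content from the component instead of copying text by hand."""
    return template.format(
        text=component.text,
        evidence="; ".join(component.evidence),
        version=component.version,
    )


# Placeholder content only; no real product or study is implied.
statement = ScientificStatement(
    statement_id="STMT-001",
    text="Placeholder scientific statement.",
    related_concepts=["placeholder concept"],
    evidence=["Placeholder Study A"],
)

# Two hypothetical channel templates that both draw on the same component.
templates = {
    "msl_slide": "{text} (Evidence: {evidence})",
    "inquiry_response": "{text}\nSupporting evidence: {evidence}\nComponent version: {version}",
}

# New evidence closes an education gap: update the component once...
statement.add_evidence("Placeholder Study B")

# ...and every asset rendered from it reflects the change.
updated_assets = {name: render(t, statement) for name, t in templates.items()}
```

The design choice worth noting is that the templates never store the statement text themselves, so there is only one place where the context can change.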

Content reuse

Breaking down information into format-free components allows reuse in different contexts. By tagging information with metadata (data that describes other data), systems can then identify and interpret that information when it is used in content.

This approach links content to structured components that teams have already reviewed and approved. If an individual asset needs to be tailored for a specific audience or localized for a specific market, for example, structure enables reuse to support these needs. This approach ensures content always reflects the approved scientific truth.
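To make the metadata idea concrete, here is a minimal Python sketch of selecting pre-approved components by their tags when tailoring an asset for a market. The `Component` class, the metadata keys (`topic`, `market`, `approved`), and the sample library are assumptions made for this example, not a description of an actual content system.

```python
from dataclasses import dataclass


@dataclass
class Component:
    component_id: str
    text: str
    metadata: dict  # data that describes the component (hypothetical keys)


# Placeholder library; texts and tags are illustrative only.
LIBRARY = [
    Component("C-1", "Placeholder efficacy statement.",
              {"topic": "efficacy", "market": "US", "approved": True}),
    Component("C-2", "Placeholder efficacy statement (localized).",
              {"topic": "efficacy", "market": "DE", "approved": True}),
    Component("C-3", "Draft safety statement.",
              {"topic": "safety", "market": "US", "approved": False}),
]


def find_components(library, **criteria):
    """Return approved components whose metadata matches every criterion."""
    return [
        c for c in library
        if c.metadata.get("approved")
        and all(c.metadata.get(key) == value for key, value in criteria.items())
    ]


def assemble_asset(library, topic, market):
    """Compose an asset for one market from approved components only."""
    return "\n".join(c.text for c in find_components(library, topic=topic, market=market))


us_asset = assemble_asset(LIBRARY, topic="efficacy", market="US")
de_asset = assemble_asset(LIBRARY, topic="efficacy", market="DE")
```

Because unapproved components are filtered out before assembly, the draft safety statement in this sketch can never reach an asset, whatever criteria are passed.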

Dynamic distribution

Dynamic distribution means delivering the right information at the right moment. Information is not fixed to a single format or channel but is ‘living and breathing,’ optimized for both AI model retrieval and human consumption.

Instead of static, one-off publishing, teams can deliver information dynamically and make it discoverable across push and pull channels. For example:

- Push to field teams when new evidence surfaces

- Pull through search or an agent chat in response to a medical inquiry

Information delivered in these contexts stays accurate and consistent. Structure enables traceability, tying external usage of information back to the system from which it originated. This creates visibility into usage that can help inform future strategy.
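The push, pull, and traceability ideas above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the `Distributor` class, the channel names, and the naive keyword search are all hypothetical stand-ins for whatever delivery and retrieval systems an organization actually uses.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Distributor:
    components: dict[str, str]                        # component_id -> approved text
    usage_log: list[dict] = field(default_factory=list)

    def _record(self, component_id: str, channel: str, mode: str) -> None:
        """Trace every delivery back to the component it originated from."""
        self.usage_log.append({
            "component_id": component_id,
            "channel": channel,
            "mode": mode,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def push(self, component_id: str, channel: str) -> str:
        """Push: proactively send a component, e.g. when new evidence surfaces."""
        text = self.components[component_id]  # raises KeyError for unknown IDs
        self._record(component_id, channel, "push")
        return text

    def pull(self, query: str, channel: str) -> list[str]:
        """Pull: answer a request using a naive keyword match (stand-in for search)."""
        hits = [cid for cid, text in self.components.items()
                if query.lower() in text.lower()]
        for cid in hits:
            self._record(cid, channel, "pull")
        return [self.components[cid] for cid in hits]

    def usage_by_component(self) -> dict[str, int]:
        """Summarize how often each component was delivered."""
        counts: dict[str, int] = {}
        for entry in self.usage_log:
            cid = entry["component_id"]
            counts[cid] = counts.get(cid, 0) + 1
        return counts


distributor = Distributor({
    "C-1": "Placeholder efficacy statement.",
    "C-2": "Placeholder safety statement.",
})
pushed = distributor.push("C-1", channel="field_team")
pulled = distributor.pull("safety", channel="agent_chat")
usage = distributor.usage_by_component()
```

The usage log is what provides the visibility described above: each entry ties a delivery on a given channel back to the originating component.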

The relationship: Improving scientific exchange with deeper dialogue and debate

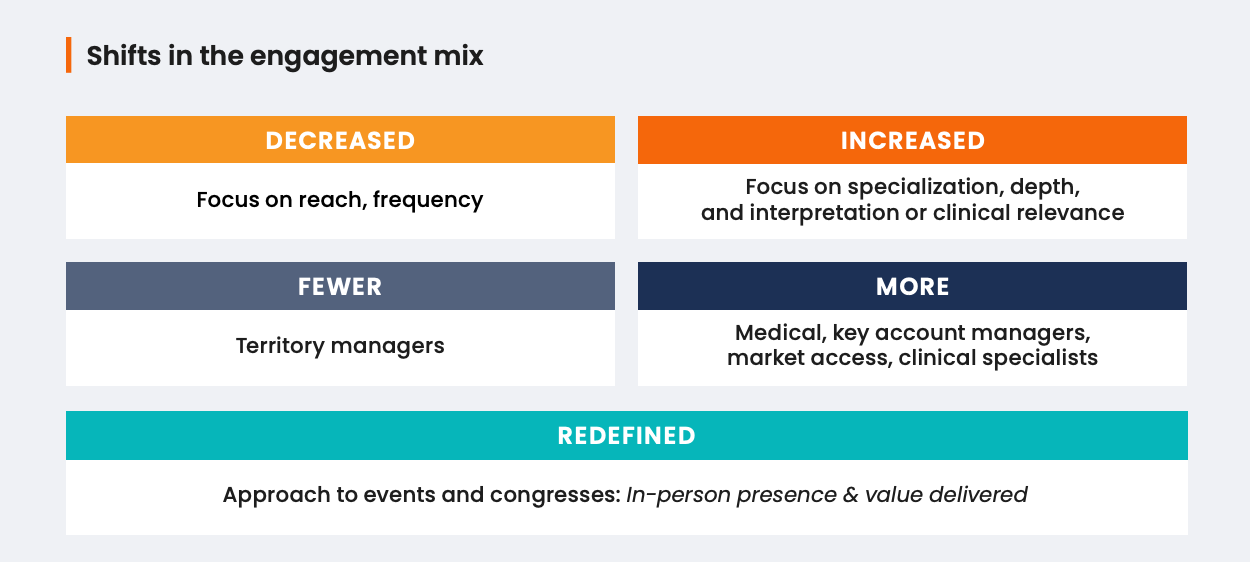

As AI increasingly becomes the first touchpoint for knowledge-seeking HCPs, relevance and influence in biopharma engagement will depend more on specialization in the field.

The reimagined MSL: Value beyond the LLM

HCPs and KOLs want instant access to information and personalized interaction. As AI becomes a frontline tool, MSLs will differentiate themselves through their expertise, perspective, and deep scientific collaboration.

Expertise is the depth of your knowledge. MSLs can provide information, but the real benefit is the context and insights they bring to the conversation.

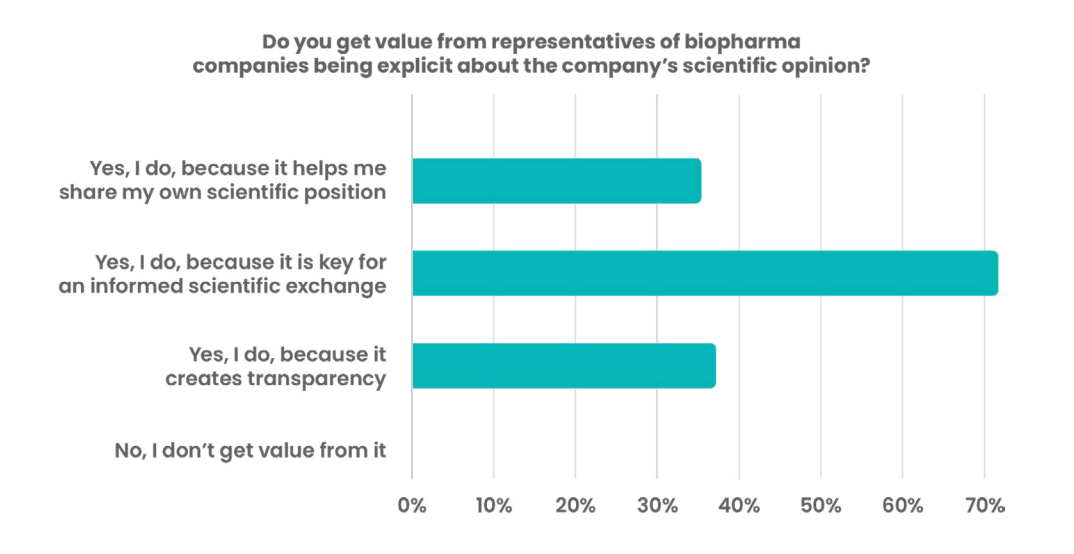

Perspective is the angle from which you apply knowledge. For MSLs, that means clearly articulating how the organization interprets and contextualizes the total body of evidence, what scientific position it holds, and how it believes that evidence should or should not translate into clinical practice. They must be willing to defend that position, debate it, and refine it, if and where necessary.

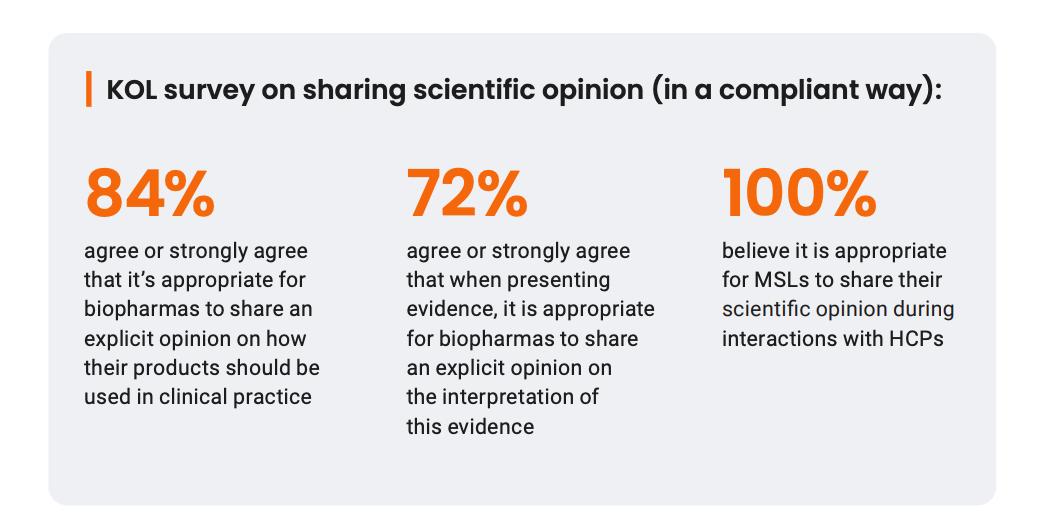

Research shows that KOLs highly value this type of exchange with MSLs:

Source: KOL Satisfaction Survey

Best practices for sharing a scientific opinion:

- Disclose all underlying evidence

- Maintain a fair and balanced view

- Steer clear of marketing language

- Use scientific argumentation

Deeper scientific collaboration focuses on the breadth of mutual value through one-to-one relationships, which AI cannot replicate. This includes distinct elements:

- Stakeholder ownership

Ownership starts as early as segmentation and targeting. This includes finding exactly who you want to talk to, getting to know their interests, preferences, and needs, and maintaining the relationship over time through coordinated activities. LLMs are simply not built to engage with this level of sophistication.

- Scientific debate

Another distinct but somewhat overlooked source of shared value is in scientific debate. Scientific disagreement is a critical source of progress that machines cannot simulate because they lack the context to ‘care’ about being wrong.

If an HCP disagrees with what AI presents, the HCP is not likely to engage in a debate with the agent. By contrast, when an HCP challenges a scientific position an MSL presents during an interaction, they will likely engage in debate. That tension is often a vital source of scientific learning, delivering critical insights for the organization.

- Insights sharing

The irreplaceable human connection is arguably most evident when it comes to insights. AI is a useful tool for surfacing insights, but the exchange comes from the dialogue itself.

KOLs share, on average, nine insights per year across a broad range of topics, with some KOLs sharing as many as 60 insights with companies they’re engaging with. While these insights are extremely valuable to biopharmas, data shows there is room for improvement.

Medical affairs has made insights collection a core priority, yet many organizations struggle to trace how this data is used to change medical strategy or inform future activities intended to close clinical care gaps. Operational improvements will be the defining element of transformation going forward.

Holistic transformation will deliver measurable impact

AI does not diminish the importance of medical affairs but does change the standard for creating value. This is especially true when it comes to how medical affairs is working toward delivering measurable impact. Strengthening the operational foundation ensures key enablers can work together to drive the desired outcomes: scientific belief alignment, clinical practice optimization, and ultimately improved patient outcomes.

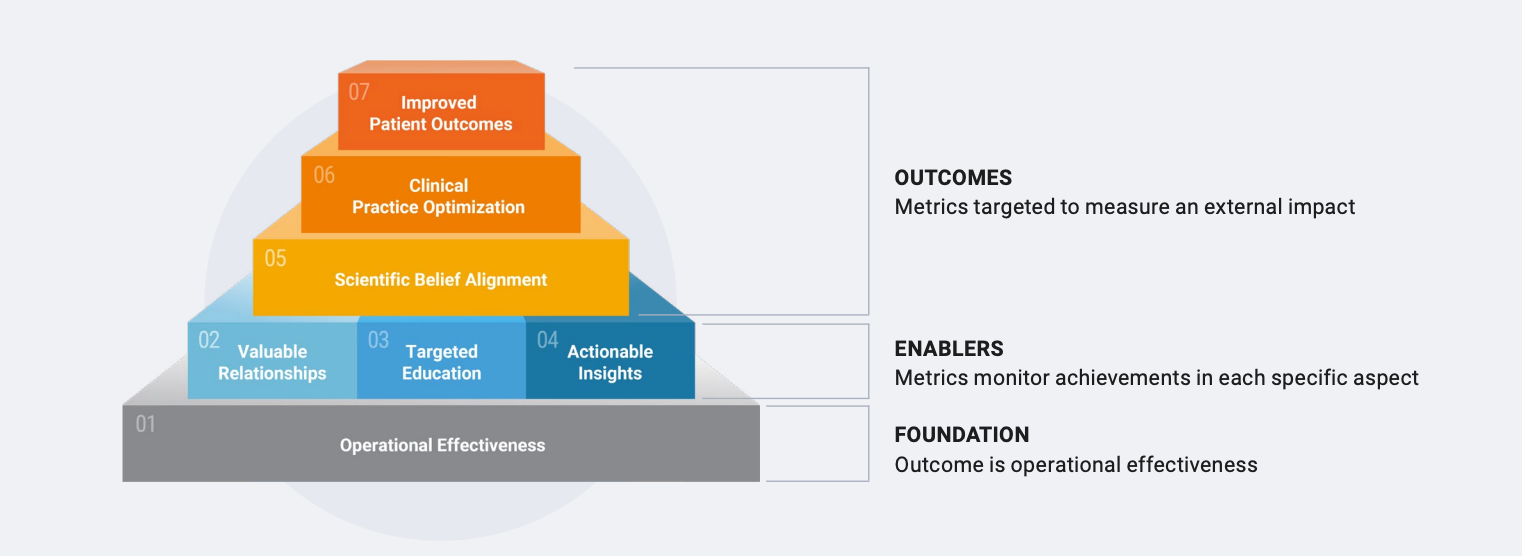

The Medical Impact Model

The medical impact model is a framework for structuring discussion of the intricate topic of measurement. Each module represents a desired outcome and shows how medical affairs teams can approach measuring it.

But biopharmas cannot demonstrate impact if the solution to every challenge is applied in isolation. Holistic transformation is the only path forward, shifting focus from “what tools do we deploy” to “what outcomes can our operating model enable?”

The path forward: Unify the medical affairs operating model

AI will not replace humans, nor will it replace the core applications they use. In fact, it will increasingly depend on both. Because AI is systematic by nature, it doesn’t distinguish between internal and external environments. It learns continuously from the information it’s given and the systems that enable it. That means AI is only as effective as the:

- Quality and structure of the data it learns from

- Consistency in the processes that govern it

- Strength of the underlying platforms that support it

For medical affairs, AI changes the mandate but not the fundamentals. Investment in the most sophisticated AI tool will never compensate for an ecosystem that isn’t built to support it.

Strengthening underlying data and technology foundations may not be the flashiest investment, but it is critical work. It’s what enables AI to work safely, reliably, and consistently at scale — regardless of where or how it’s being applied. In this context, the limitations of point solutions become more acute.

AI depends on continuity — consistent data structures, shared context, and connected workflows. Point solutions are not built to support this. Accumulating a collection of tools designed to work in isolation fragments the ecosystem, reinforces silos, and places the burden of coordination on people rather than systems:

- Teams spend time stitching together information that should already be connected

- Evidence moves through the content lifecycle with no reliable chain of custody

- Engagement activities lack coordination, and any potential insights derived from them slip through the cracks

You don’t have to reinvent these activities to effectively orchestrate them. Build a foundation that creates continuity from where the science takes shape to where relationships create meaningful value and insights in the field.

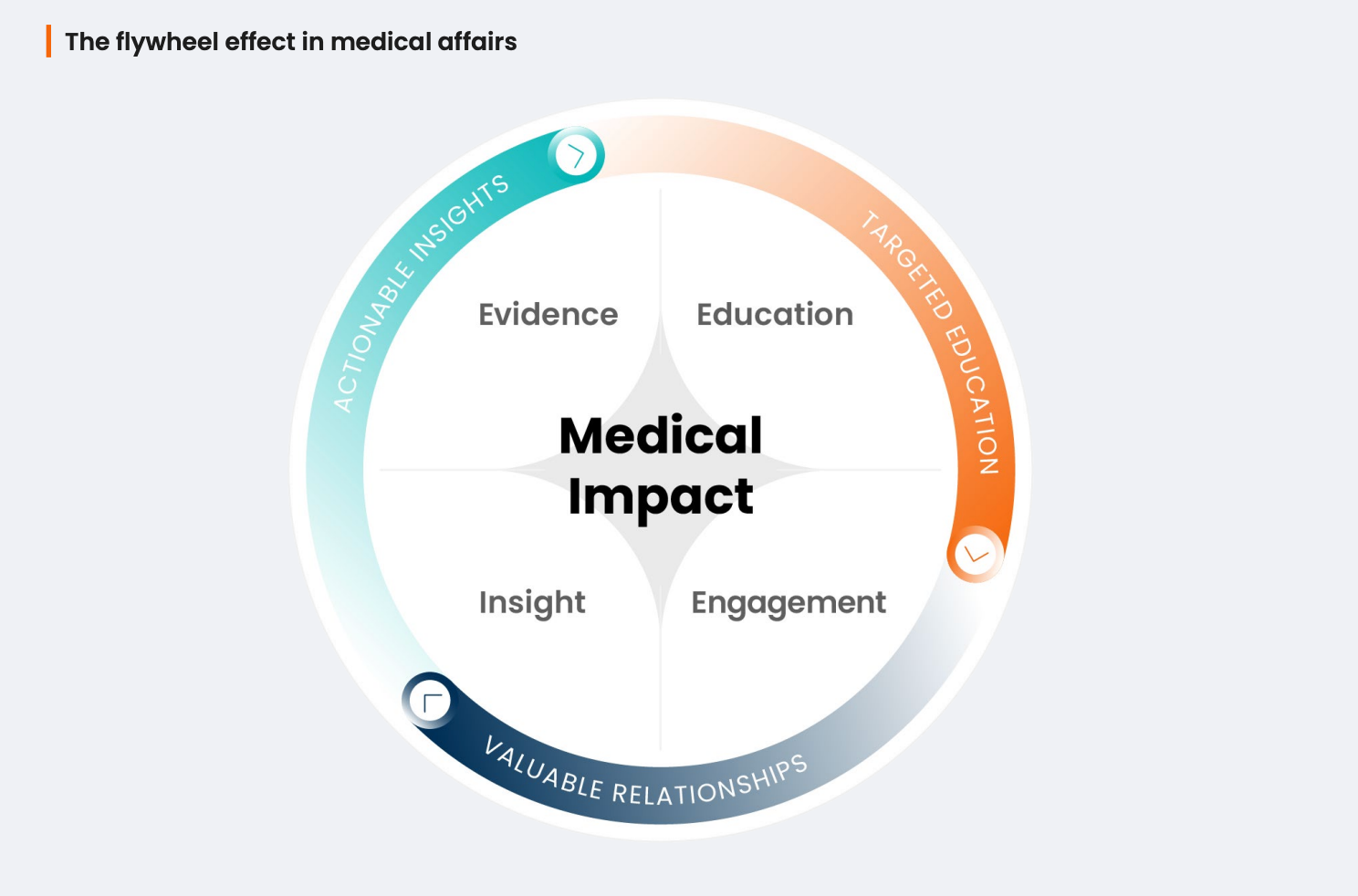

When these pieces connect, they start reinforcing each other. Medical affairs operates in a coordinated motion, with AI becoming a natural enabler to accelerate impact across the full cycle of work.

Investing in effective change management

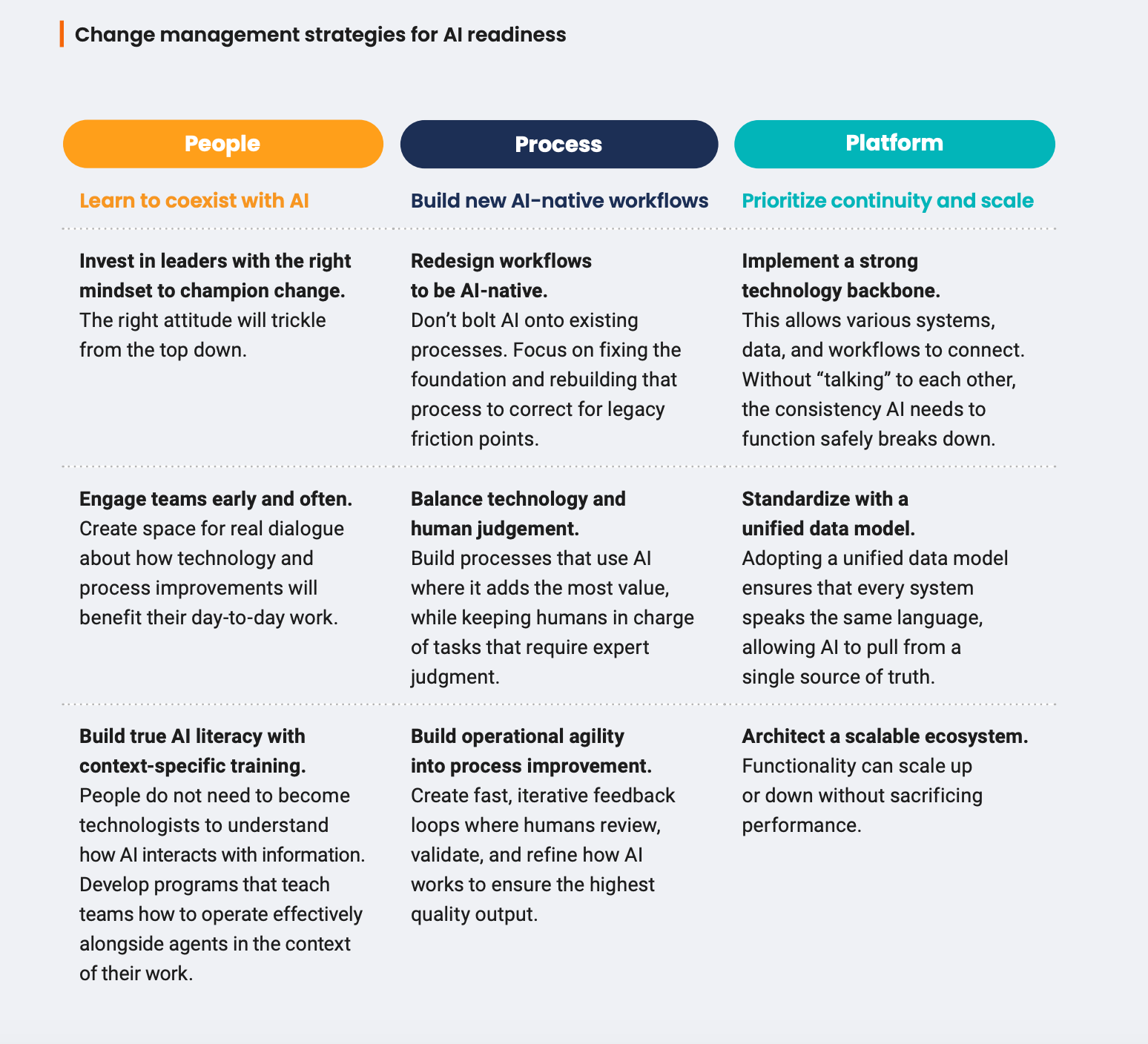

AI and other technologies do not create impact on their own. Impact comes from an entire organization willing to rethink how work gets done and commit to making the required changes.

Effective change management aligns people, processes, and platforms around shared outcomes. Once you’ve assessed where and how to leverage AI to support those goals, you can turn your attention toward organizational readiness. Here are some ways to get started.

THE BOTTOM LINE

AI changes what’s possible, but medical affairs decides what’s next

Organizations that embrace holistic transformation will create an environment for change to flourish — no matter how much or how fast technology evolves. AI has brought a fundamental change in how society engages with information. Within life sciences and across the healthcare ecosystem, it’s important to remember that this change is driven by the ultimate goal of improving patient care.
